Restore balance and reclaim personal data

1. The major actors – digital corporations and governments – need a haystack to find a needle.[i] Their approach has three steps:

(1) Create and adapt models using an inference engine and rules;

(2) Apply the model to data and match individuals to groups;

(3) Take actions based on the matching, observe the results and tune the model.[ii] The more data, the better the model.
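The three steps can be illustrated with a deliberately simplified sketch. It assumes a hypothetical rule-based model in which each rule adds a weight to a person's score, and a score at or above a threshold places that person in the target group. The `RuleModel` class, the attribute names and the threshold-tuning rule are all illustrative assumptions, not a description of how any real agency or corporation builds its models.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical profile: attribute name -> observed value, plus an "id" key.
Profile = dict

@dataclass
class RuleModel:
    # Step 1: a model made of weighted rules (an illustrative stand-in for
    # an inference engine). Rules, weights and threshold are assumptions.
    rules: list[tuple[Callable[[Profile], bool], float]]
    threshold: float

    def score(self, profile: Profile) -> float:
        # Sum the weights of every rule the profile satisfies.
        return sum(weight for rule, weight in self.rules if rule(profile))

    def match(self, profiles: list[Profile]) -> list[Profile]:
        # Step 2: apply the model and assign individuals to the target group.
        return [p for p in profiles if self.score(p) >= self.threshold]

    def tune(self, matched: list[Profile], confirmed: set, step: float = 0.5) -> None:
        # Step 3: observe which matches were confirmed by the outcome of the
        # action taken, then adjust the threshold (a crude tuning rule).
        if not matched:
            return
        precision = sum(p["id"] in confirmed for p in matched) / len(matched)
        if precision < 0.5:
            self.threshold += step  # too many false matches: be stricter
        elif precision > 0.9:
            self.threshold -= step  # matches hold up: cast a wider net


model = RuleModel(
    rules=[
        (lambda p: p.get("flagged_contacts", 0) > 3, 1.0),
        (lambda p: p.get("frequent_traveller", False), 1.5),
    ],
    threshold=2.0,
)
people = [
    {"id": "a", "flagged_contacts": 5, "frequent_traveller": True},   # score 2.5
    {"id": "b", "flagged_contacts": 1, "frequent_traveller": True},   # score 1.5
    {"id": "c", "flagged_contacts": 4, "frequent_traveller": False},  # score 1.0
]
matched = model.match(people)         # only "a" reaches the 2.0 threshold
model.tune(matched, confirmed=set())  # precision 0.0, so threshold rises to 2.5
```

Each pass through `match` and `tune` is one turn of the loop: more profiles and more observed outcomes give the model more to adjust against, which is why the actors want the haystack.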

2. The old-school design approach to controlling this surveillance is to ask questions such as: What are the rules? What are the consent points? Where are consents held? What are the defaults (opt-in or opt-out)? What are the obligations to the individual? How are those obligations met and monitored? How are obligations passed between actors? Can we regulate personal data markets? Should controls be centralized or distributed? What are the incentives? How do we resource enforcement?

3. This old-school design will not work. The solution cannot be designed from within the frame of reference of the problem. Governments and digital corporations are committed to the current operating model – institutions try to preserve the problem to which they are the solution. Accepting the parameters of data surveillance legitimizes the relationship between the surveiller and the surveillee.[iii]

4. The global personal data ecosystem must develop homeostatic controls to absorb the variety of the system. Dynamic equilibrium must balance the interests of the different actors using transparency, feedback loops, and intrinsic regulators.[iv]

5. An effective future for the personal data ecosystem must manage this variety at a global level, cope with complexity and ambiguity, and still be simple and easy to understand. It must be designed for a future world and recognize that the rate of technological change is exponential, which is why it cannot rely on old-school design. In the global village there are only Pulchinella’s secrets.[v]

6. There are no legal, political, economic or social levers that can control the data appetites of governments and corporations in the post-digital world.[vi] Once you eliminate the impossible, whatever remains, no matter how improbable, must be the truth.

7. The only way to achieve control is to use technology to rebalance the asymmetry – similar to the coveillance idea proposed by Kevin Kelly.[vii] The technology must provide a facility for the surveillee to retrieve all information about their personal data, including what has been collected, who has accessed it, what data has been linked, and what inferences have been made.
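One way to picture the retrieval facility is as a report over a log of events about a person's data, grouped by the four questions in the paragraph above. The following minimal sketch assumes such a log already exists and is complete, which is the hard part; the `DataEvent` record, the event kinds and the `transparency_report` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical event kinds, one per question the surveillee should be able to ask.
KINDS = ("collected", "accessed", "linked", "inferred")

@dataclass(frozen=True)
class DataEvent:
    subject: str  # the person the data is about
    kind: str     # one of KINDS
    actor: str    # the organization that performed the action
    detail: str   # what was collected, accessed, linked or inferred

def transparency_report(events: list[DataEvent], subject: str) -> dict[str, list[tuple[str, str]]]:
    """Group every event about `subject` by kind, as (actor, detail) pairs."""
    report: dict[str, list[tuple[str, str]]] = {kind: [] for kind in KINDS}
    for event in events:
        if event.subject != subject:
            continue  # events about other people are not disclosed
        if event.kind not in report:
            raise ValueError(f"unknown event kind: {event.kind}")
        report[event.kind].append((event.actor, event.detail))
    return report


events = [
    DataEvent("alice", "collected", "shop.example", "purchase history"),
    DataEvent("alice", "accessed", "ad-broker.example", "purchase history"),
    DataEvent("alice", "linked", "ad-broker.example", "purchase history + location"),
    DataEvent("alice", "inferred", "ad-broker.example", "likely new parent"),
    DataEvent("bob", "collected", "shop.example", "email address"),
]
report = transparency_report(events, "alice")
for kind, entries in report.items():
    print(kind, entries)
```

The report only answers the surveillee's questions for events that were actually recorded, which is why the next step is to make the data itself carry that record.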

8. Personal data must be created with the ability to annotate and transmit information about what has happened to it. The annotations must be embedded in tamper-proof technology within the global personal data ecosystem, and the internet of things will need to manage these annotations.
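A minimal sketch of self-annotating personal data follows, assuming a hash chain (the linking technique used in blockchain ledgers) as the protection mechanism: each annotation's hash also covers the previous hash, so altering, reordering or removing an earlier entry breaks verification. Strictly, this makes the history tamper-evident rather than tamper-proof, and the `AnnotatedDatum` class and its fields are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first annotation

def _digest(prev_hash: str, annotation: dict) -> str:
    # Canonical JSON so the same annotation always hashes the same way.
    payload = json.dumps(annotation, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

@dataclass
class AnnotatedDatum:
    """A personal data item carrying a hash-chained record of what happened to it."""
    value: str
    annotations: list[dict] = field(default_factory=list)
    hashes: list[str] = field(default_factory=list)

    def annotate(self, actor: str, action: str) -> None:
        # Append an annotation whose hash is chained to the previous one.
        entry = {"actor": actor, "action": action}
        prev = self.hashes[-1] if self.hashes else GENESIS
        self.annotations.append(entry)
        self.hashes.append(_digest(prev, entry))

    def verify(self) -> bool:
        # Recompute the chain; an edited, reordered or removed entry that is
        # followed by a stored hash causes a mismatch.
        if len(self.annotations) != len(self.hashes):
            return False
        prev = GENESIS
        for entry, stored in zip(self.annotations, self.hashes):
            if _digest(prev, entry) != stored:
                return False
            prev = stored
        return True


item = AnnotatedDatum("alice@example.org")
item.annotate("shop.example", "collected")
item.annotate("ad-broker.example", "accessed")
print(item.verify())  # True
item.annotations[0]["actor"] = "someone-else"  # rewrite history
print(item.verify())  # False
```

Truncating the most recent entries together with their hashes would still pass `verify`, so in practice the latest hash would need to be anchored somewhere the holder of the data cannot rewrite, for example a shared ledger.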

9. There are many questions that must be addressed in imagining this future – political economy, policy, leadership, engineering and technology – including:

· What is the ethical foundation of the post-digital social contract? Why is it important and what are the underlying values?

· Who has the interest, the insight and the energy to create the post-digital social contract? Where will leadership come from?

· How can legitimate government espionage activities operate effectively in secret while preserving the values of the post-digital social contract?

· What is the economic impact of the post-digital social contract? What happens to competitive advantage if there is full transparency of the personal data ecosystem?

· Is it possible? Can technology attach persistent transmitters to individual items of personal data?

10. I would like to think it is possible. The technology needs to be network-based and decentralized while maintaining trust and confidence. I have identified two areas where similar concepts are implemented in different domains – the blockchain and the Digital Object Architecture – which gives me some confidence that a technology could be found to rebalance the information asymmetry.

· The blockchain algorithm is currently applied in many digital currencies, of which Bitcoin is the best known. Some believe that the underlying technology could be a disruptive force in many other sectors by creating a network of trust from untrusted components.[viii]

· The Digital Object Architecture was designed to enable all types of information to be managed over very long time frames, and has been adopted in ITU-T Recommendation X.1255, a framework for discovery of identity management information.[ix]

11. The future of the global personal data ecosystem needs serious systems thinking, using expertise from a range of disciplines: lawyers & public policy analysts, commercial marketers & financiers, geeks & hackers, intellectuals, international governance specialists, privacy advocates, piracy advocates and data scientists.

Series first published March 2015

[ii] The model for placing people on no-fly lists is described in a 166-page manual analyzed by The Intercept; more than 40% of the people on the list have no affiliation to a recognized terrorist group.

[iii] The idea that institutions become dedicated to the problem they set out to solve, and so perpetuate it, has been named the Shirky Principle. As an aside, there are no English words for either of the two parties involved in surveillance.

[iv] These terms are taken from cybernetics. Information on cybernetics can be found in An Introduction to Cybernetics (1956) by W. Ross Ashby, where he describes the Law of Requisite Variety, and in Brain of the Firm (1972) and Platform for Change (1975) by Stafford Beer, where he describes the Viable System Model.

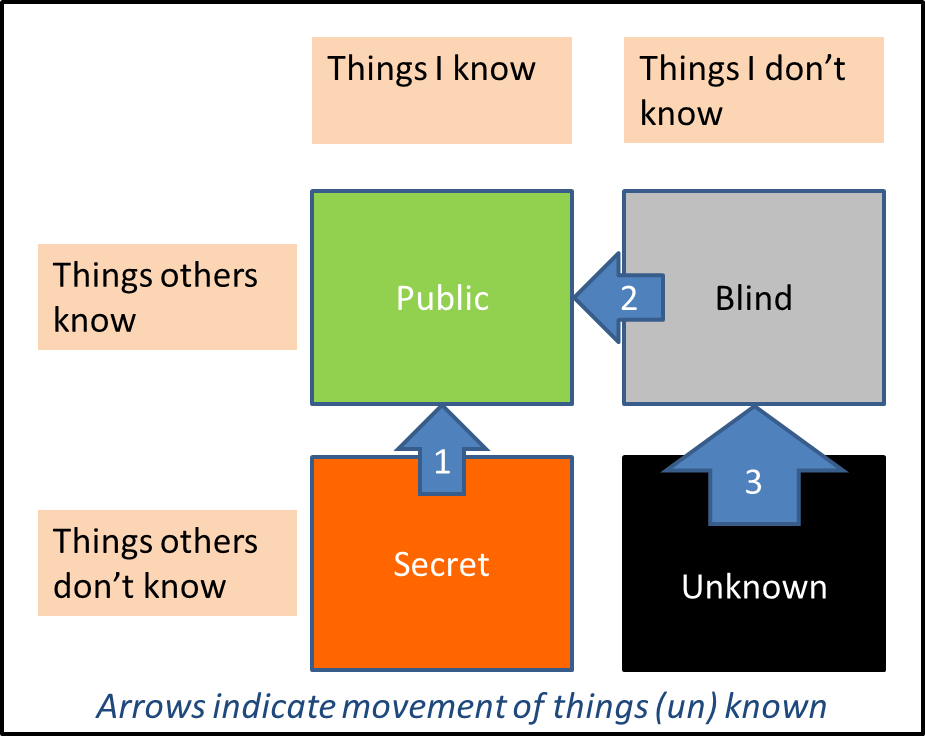

[v] The idea that there are no secrets in the village was a central theme of Italian “commedia dell’arte” in the 16th century. Pulchinella Revisited explains how to derive four laws of secrecy in the information society.

[vi] As Evgeny Morozov says at the end of this long article, “the ultimate battle lines are clear. It’s a question of whether all these sensors, filters, profiles and algorithms can be used by citizens and communities for some kind of emancipation from bureaucracies and companies.” He suggests, unrealistically in my view, that social control of the big data stores is an option.

[vii] “How can we have a world in which we are all watching each other, and everybody feels happy?” – a conversation.

[viii] For more on the implications of blockchain, see “Blockchain and decentralization hold big implications for society” and “How blockchain could power an alternate internet”.

[ix] For information on the Digital Object Architecture, refer to the Corporation for National Research Initiatives (CNRI) and the Digital Object Numbering Authority.
